Volts, amps, watts and breakers

Discussion

What causes a breaker to trip? Is it purely the amps or is it the total power that it responds to?

We had an issue with the power supply to the factory where I work. The voltage supplied to site was low, around 210V during peak hours. This caused motors to trip and our EV charger to cut out (meaning the directors' cars wouldn't charge...).

We have a 2000Amp supply and previously, at full production, the max draw on the incoming meter showed 1950Amps, which has always been on my mind as it's a little close for comfort.

The voltage issues were cured by changing the tappings on our transformer and it now sits at a far healthier 235V. The issues have gone away and we are all good now.

While we haven't gone to full production since the change (we produce fast-moving seasonal consumable products), the current values are lower than I am used to seeing on the meter, which is understandable from the power equation, P = V x I (power the same, volts up, amps down, etc.).

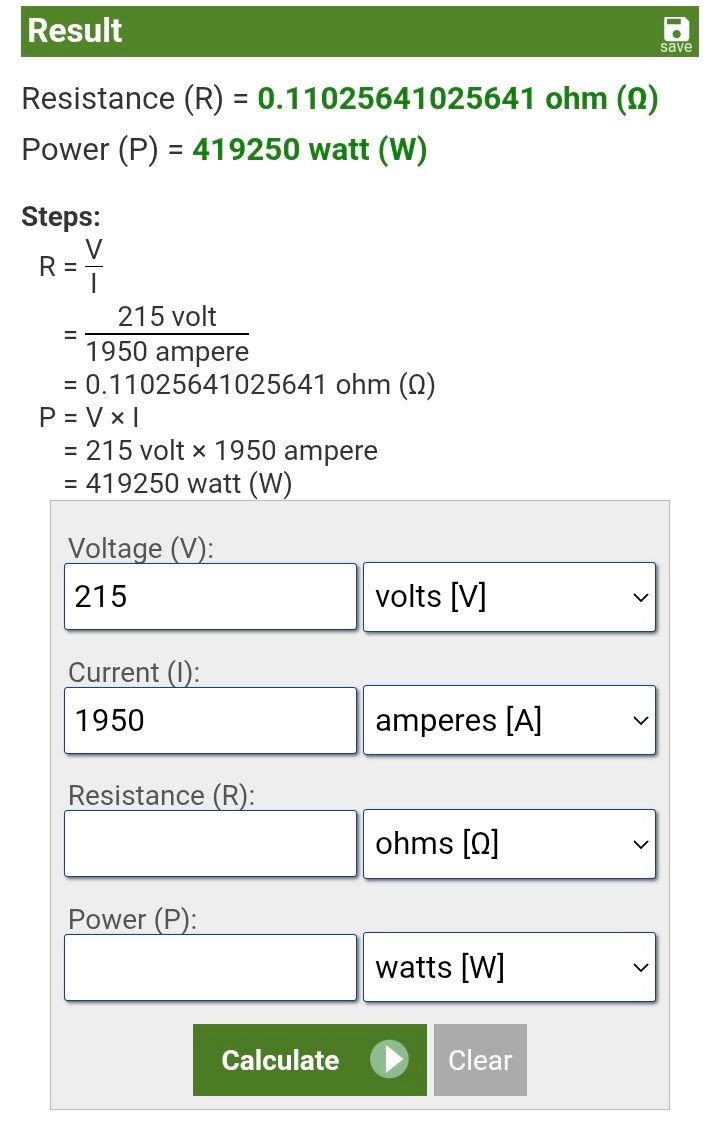

So just out of interest I used an online calculator (as my maths isn't the most reliable) to work out the power consumed before the transformer adjustments:

This gives a power figure of about 420kW

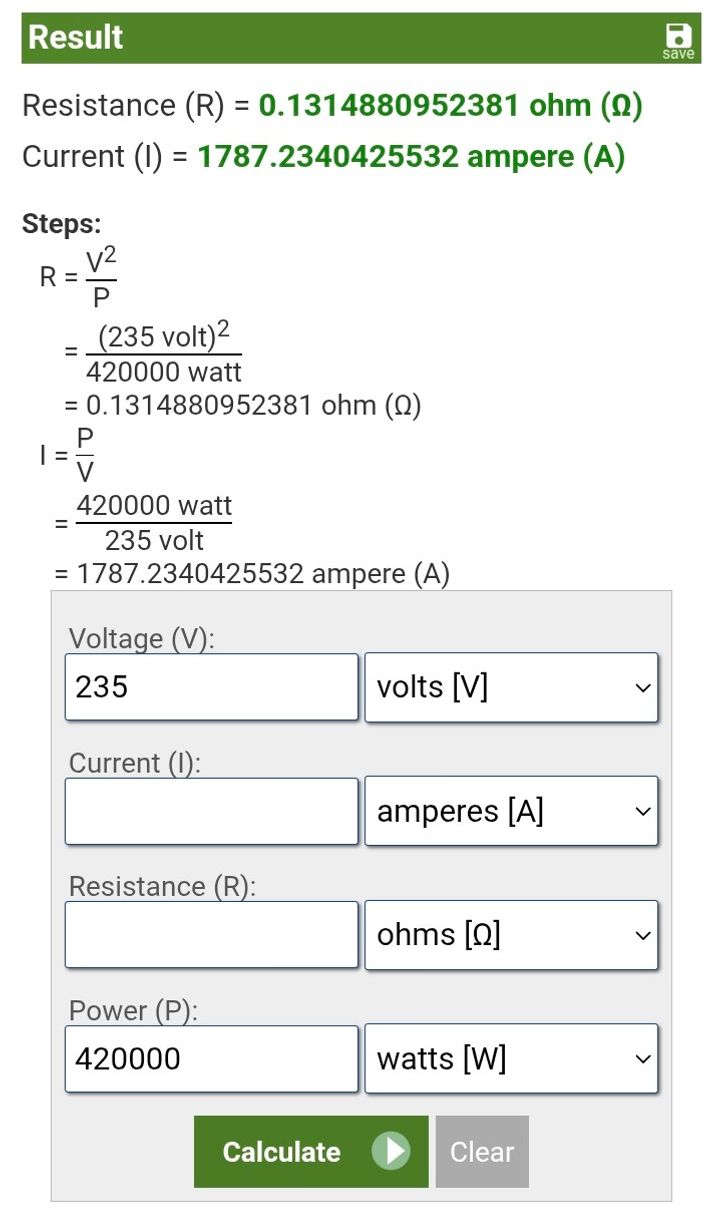

If I then use that power with the new increased voltage:

It suggests that the new current at maximum consumption will be about 1790Amps, which is about 160Amps less. That is good, as it means there is an increased buffer between the max consumption and the incoming breaker size.
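The arithmetic above can be reproduced with a few lines of Python. This is only a sketch using the figures quoted in the post and plain P = V x I; it assumes the load behaves as constant power and ignores the three-phase factor and power factor, which the online calculator may or may not have included.

```python
# Simplified P = V * I check using the figures quoted in the post.
# Assumption: plain P = V * I, with no sqrt(3) factor and no power factor.
OLD_MAX_CURRENT_A = 1950.0  # peak meter reading before the tap change
NEW_VOLTAGE_V = 235.0       # site voltage after the tap change
POWER_W = 420_000.0         # "about 420kW" from the online calculator


def current_for_power(power_w: float, voltage_v: float) -> float:
    """Current drawn by a constant-power load at the given voltage."""
    return power_w / voltage_v


new_current_a = current_for_power(POWER_W, NEW_VOLTAGE_V)
reduction_a = OLD_MAX_CURRENT_A - new_current_a

print(f"New max current: {new_current_a:.0f} A")  # 1787 A
print(f"Reduction:       {reduction_a:.0f} A")    # 163 A
```

The unrounded result is about 1787A, which matches the "1790Amps" and "160Amps less" quoted above once rounded.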

But I started considering what actually causes the breaker to trip. I know they are activated thermally: they overheat and "pop", going open circuit.

I have always understood that this is a result of the current flowing through a resistance (hence long-distance transmission is done at very high voltages, to deliver the same power at a lower current).
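As a rough illustration of that idea, the heat developed in a thermal trip element can be modelled as I squared times R. The sketch below is a simplified model, not a description of any particular breaker: the element resistance is a made-up value, and a breaker of this size may well use an electronic trip unit instead. The point is only that the supply voltage does not appear in the heating calculation.

```python
# Simplified model: heating in a thermal trip element is I^2 * R.
# ELEMENT_RESISTANCE_OHM is a hypothetical value chosen for illustration.
ELEMENT_RESISTANCE_OHM = 0.0001
LOAD_CURRENT_A = 1950.0


def element_heating_w(current_a: float, resistance_ohm: float) -> float:
    """Power dissipated in the trip element; note there is no voltage term."""
    return current_a ** 2 * resistance_ohm


for supply_voltage_v in (210.0, 235.0):
    heat_w = element_heating_w(LOAD_CURRENT_A, ELEMENT_RESISTANCE_OHM)
    print(f"{supply_voltage_v:.0f} V supply, {LOAD_CURRENT_A:.0f} A: {heat_w:.2f} W in element")
```

Both lines print the same heating figure, because in this model only the current through the element matters.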

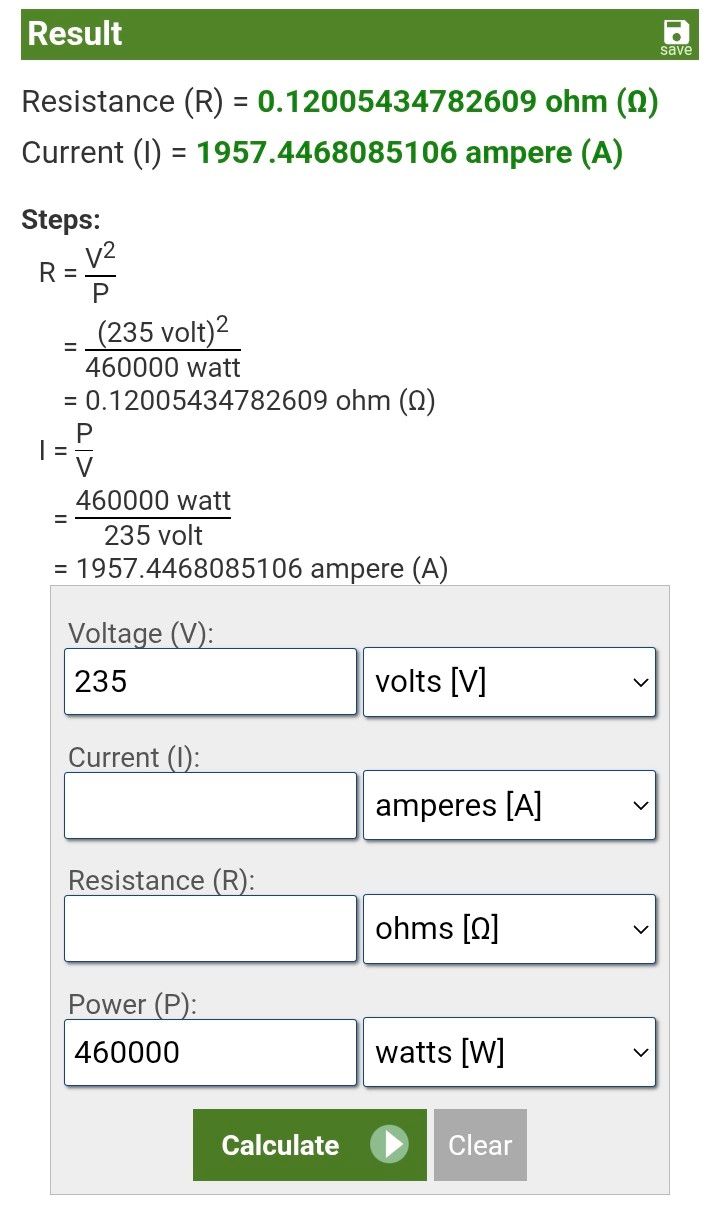

Then I thought, well, if the voltage is higher and the resistance hasn't changed, what would the power consumption be if the current were the same 1950Amps?

So if the voltage is now 235V and the current draw was still 1950Amps, then the total power is now about 460kW.

This implies to me that we could use an additional 40kW of power and still be within the limits of our main incoming breaker and distribution equipment.

Am I missing anything, or have I got this drastically wrong?

Basically the question is whether the breaker would actually care about an additional 40kW.
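The same simplified P = V x I arithmetic gives the headroom figure. Again this is only a sketch using the numbers from the post; it ignores the three-phase factor and power factor, so the absolute values are indicative rather than exact.

```python
# Power available at the old peak current once the voltage is 235 V.
# Assumption: plain P = V * I, with no sqrt(3) factor and no power factor.
PEAK_CURRENT_A = 1950.0       # previous maximum meter reading
NEW_VOLTAGE_V = 235.0         # site voltage after the tap change
PREVIOUS_POWER_W = 420_000.0  # "about 420kW" worked out at the old voltage

power_at_peak_current_w = NEW_VOLTAGE_V * PEAK_CURRENT_A
extra_power_w = power_at_peak_current_w - PREVIOUS_POWER_W

print(f"Power at 1950 A: {power_at_peak_current_w / 1000:.0f} kW")  # 458 kW
print(f"Extra power:     {extra_power_w / 1000:.0f} kW")            # 38 kW
```

The unrounded figures are 458.25kW and 38.25kW, consistent with the rounded 460kW and 40kW above.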

Surely if the current is within its current rating then that's all that matters?

Edited by Buzz84 on Wednesday 18th March 23:28

Yeah depending on the protection device, it will generally only care about current.

So you could theoretically add additional load to your system at the new voltage, but as above, you don't have the headroom with your supply.

I'd be content that you're now running everything at 230Vac where it's much happier and leave it at that.

Simpo Two said:

Too much thinking. Just turn the knob up until it goes ping, mark the position, put it back in and carry on.

That's one way: crank it up, find out, and knock it down one notch.

Gary29 said:

Yeah depending on the protection device, it will generally only care about current.

So you could theoretically add additional load to your system at the new voltage, but as above, you don't have the headroom with your supply.

I'd be content that you're now running everything at 230Vac where it's much happier and leave it at that.

Yes that's the main thinking, all is better and happier now but then I noticed that the consumption on the meters just seemed lower, which then got me thinking about the science behind it all.

We are making a couple of changes: swapping out 2x 18kW air compressors for 1x 37kW.

(The newer 18kW is staying as backup in case of issues and for when servicing is needed, but shouldn't run otherwise.)

We are also getting a 40ft refrigerated container. That should be low power as it only needs to maintain temperature, since anything that goes in it is already frozen.

The above has been arranged/calculated via our electrical contractors. It was going to be close before the voltage adjustment, but now that's been done the net effect is that our headroom is still better than it was before the adjustment.

Mr Pointy said:

Are your loads resistive? Inductive? What's the overall power factor of the installation?

If your loads are resistive then the power/current drawn will go up as the voltage goes up. If they aren't resistive then something different might happen.

Inductive all the way: VFFS filling machines, conveyor belts, motors, pumps, fans.

The packing machines work by heat sealing, so they have resistive heaters, but that's a drop in the ocean as the majority of power consumed is by 7 x 150hp motors (~190Amps each).

Power factor, I would have to look as that's not my area of expertise. (As mentioned above, we have some very good contractors we call on for that.) Pretty sure it auto-adjusts and I think I have seen 0.95 on the screen.
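The resistive versus non-resistive distinction raised in the quoted post can be sketched with two idealised load models. The values below are hypothetical (a heater and a load that each draw 10A at 210V), and treating a motor as a pure constant-power load is an approximation; real motors sit somewhere in between depending on how they are loaded.

```python
# Two idealised load models; all example values are hypothetical.
def resistive_current_a(voltage_v: float, resistance_ohm: float) -> float:
    """Fixed resistance (e.g. a heater): current rises with voltage."""
    return voltage_v / resistance_ohm


def constant_power_current_a(power_w: float, voltage_v: float) -> float:
    """Idealised constant-power load: current falls as voltage rises."""
    return power_w / voltage_v


HEATER_RESISTANCE_OHM = 21.0  # draws 10 A at 210 V
LOAD_POWER_W = 2100.0         # draws 10 A at 210 V

for voltage_v in (210.0, 235.0):
    heater_a = resistive_current_a(voltage_v, HEATER_RESISTANCE_OHM)
    load_a = constant_power_current_a(LOAD_POWER_W, voltage_v)
    print(f"{voltage_v:.0f} V: heater {heater_a:.2f} A, constant-power load {load_a:.2f} A")
```

At the higher voltage the heater draws more current and the constant-power load draws less, which is why the mix of load types matters when predicting the new meter readings.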
