Tesla to replace parking sensors with vision

Discussion

gangzoom said:

annodomini2 said:

They're all flawed in certain ways, it's the combination that's important.

Focusing on optical sensing gives you no backup; humans predominantly use sight, but it's not our only sense.

I didn't realise the DVLA allows you to drive if you are blind? What other sense do you have that allows you to drive if you cannot see?

You listen to what is going on around you; an obvious example is emergency sirens.

You feel the motion of the car with touch and inertia.

Computers have the advantage of not being constrained by biology so adding other information sources increases their capability.

budgie smuggler said:

Hans_Gruber said:

Because having 2 systems, in the split second a decision has to be made, causes confusion. Computers, like humans, have reaction times.

Radar is incredibly basic and the field of view very limited. Assuming the camera and recognition software work, it is far more advanced. Other manufacturers rely only on radar; that doesn’t mean it is better!

If we are referencing human senses, ever notice how the very best engineering solutions take their inspiration from nature? Submarines copy whales, air turbine blades copy whale fins, racing boats copy shark skin; there are many, many others. I'm not David Attenborough, but to my knowledge only one animal* (above water) uses radar to see. The rest use eyes! Cameras with recognition software will, as the technology evolves, easily outperform radar.

(Bats don’t even use the same basic radar cars use, they use echolocation, not radio waves that are subject to interference. They also have very limited vision for the same reason why radar has been disabled on your Tesla)

I thought Tesla's (now removed) parking sensors were ultrasonic (i.e. echolocation) rather than radar?

I don't doubt optical processing can in theory be better than ultrasonic sensors, but the thing is, ultrasonics are so simple to implement. All you need to do is time the arrival of the reflected signal and use that delay to change the tone: done.
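The "time the echo, change the tone" idea can be sketched in a few lines. This is a minimal illustration, assuming sound travels at roughly 343 m/s in air at 20 °C; the warning thresholds and beep gaps (`WARN_START_M`, `SOLID_TONE_M`, and so on) are hypothetical values chosen for the example, not taken from any real parking sensor.

```python
from typing import Optional

SPEED_OF_SOUND_M_S = 343.0  # approx. speed of sound in dry air at 20 °C (assumption)

# Hypothetical warning thresholds, not taken from any real sensor.
WARN_START_M = 1.5    # start beeping inside this distance
SOLID_TONE_M = 0.3    # continuous tone inside this distance
SLOWEST_GAP_S = 0.5   # pause between beeps at WARN_START_M
FASTEST_GAP_S = 0.05  # pause between beeps just outside SOLID_TONE_M


def echo_to_distance_m(round_trip_s: float) -> float:
    """Convert an echo's round-trip time into a one-way distance in metres."""
    # The pulse travels out to the obstacle and back, so halve the path.
    return SPEED_OF_SOUND_M_S * round_trip_s / 2.0


def beep_gap_s(distance_m: float) -> Optional[float]:
    """Map distance to the pause between beeps.

    Returns None when nothing is close enough to warn about and 0.0 for a
    continuous tone when the obstacle is very close.
    """
    if distance_m > WARN_START_M:
        return None
    if distance_m < SOLID_TONE_M:
        return 0.0
    # Linear interpolation: closer obstacle -> shorter pause -> faster beeping.
    fraction = (distance_m - SOLID_TONE_M) / (WARN_START_M - SOLID_TONE_M)
    return FASTEST_GAP_S + fraction * (SLOWEST_GAP_S - FASTEST_GAP_S)


if __name__ == "__main__":
    for echo_ms in (12.0, 6.0, 3.0, 1.0):
        d = echo_to_distance_m(echo_ms / 1000.0)
        print(f"echo {echo_ms:4.1f} ms -> {d:.2f} m, gap {beep_gap_s(d)}")
```

A real unit also has to deal with noise, cross-talk between sensors and the minimum range of the transducer, but the core signal path really is this short.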

Whereas with an optical system, you need binocular vision to perceive depth, plus the ability to cope with pitch black, bright sunlight, dazzling reflections from shop windows and puddles, condensation on the lens, fog, etc. etc.
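Why binocular vision gives depth can be shown with the textbook pinhole stereo relation Z = f·B/d, where f is the focal length in pixels, B the distance between the two cameras and d the disparity (how far a point shifts between the left and right images). The sketch below assumes two identical, rectified cameras, which is exactly what the formula needs; the focal length and baseline in the usage loop are made-up numbers.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by two identical, rectified cameras (pinhole model).

    Z = f * B / d: the closer the object, the larger its disparity, i.e. the
    further it shifts between the left and right images.
    """
    if disparity_px <= 0:
        # Zero disparity means the point is at infinity or the match failed.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error_m(focal_px: float, baseline_m: float, disparity_px: float,
                  disparity_err_px: float = 1.0) -> float:
    """How far the depth estimate moves if the disparity is off by a little."""
    z_true = stereo_depth_m(focal_px, baseline_m, disparity_px)
    z_off = stereo_depth_m(focal_px, baseline_m, disparity_px + disparity_err_px)
    return abs(z_true - z_off)


if __name__ == "__main__":
    # Made-up camera: 1000 px focal length, cameras 0.2 m apart.
    for d in (200.0, 100.0, 20.0, 10.0):
        z = stereo_depth_m(1000.0, 0.2, d)
        err = depth_error_m(1000.0, 0.2, d)
        print(f"disparity {d:5.1f} px -> depth {z:5.2f} m (1 px error moves it {err:.3f} m)")
```

Because depth goes as 1/d, a one-pixel matching error matters far more for distant objects than for near ones, and the whole approach depends on reliably matching the same point in both images, which is where dirt, glare and condensation cause trouble.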

PS. IIRC shrews also use echolocation.

Due to this, the minimum range to the car in front has been increased, as it is attempting to use just the camera interface, but the reaction time is slower as it has to process the visual information.

Given that a camera + image recognition can replace ultrasound parking sensors, rain detection, radar within the range the conditions determine you should be using it (i.e. not beyond the distance you can see) and it is necessary anyway to do hazard detection, driver assistance, then it is obviously better to simplify your design and concentrate on using cameras. It'll eventually save money and it'll be a better product. Doing more with less is always a good idea, and cameras can also do a significantly better job of detecting rain and spotting obstructions when parking than the previous generation of technology.

Clearly selling a product that is missing features because you haven't got the new version working yet is complete bulls**t, but that doesn't have any bearing on whether the design change itself is a good idea or not.

ATG said:

Given that a camera + image recognition can replace ultrasound parking sensors, rain detection, radar within the range the conditions determine you should be using it (i.e. not beyond the distance you can see) and it is necessary anyway to do hazard detection, driver assistance, then it is obviously better to simplify your design and concentrate on using cameras. It'll eventually save money and it'll be a better product. Doing more with less is always a good idea, and cameras can also do a significantly better job of detecting rain and spotting obstructions when parking than the previous generation of technology.

Clearly selling a product that is missing features because you haven't got the new version working yet is complete bulls**t, but that doesn't have any bearing on whether the design change itself is a good idea or not.

I don't think it is 'obviously better'; you're replacing simple, reliable and well-understood systems with extremely complex software.

I've been in a few Teslas now and seen software glitches with my own eyes (e.g. other vehicles not being shown in the correct place on the display, the incorrect type of vehicle being detected, rain detection making the wipers go like the clappers in light drizzle and then carrying on when the screen is bone dry).

ATG said:

Clearly selling a product that is missing features because you haven't got the new version working yet is complete bulls**t, but that doesn't have any bearing on whether the design change itself is a good idea or not.

That used to be called vapourware!

If the product doesn't work, it's not really a product. I don't really understand how Tesla gets away with developing its product while it's in customers' hands rather than in the lab.

budgie smuggler said:

ATG said:

Given that a camera + image recognition can replace ultrasound parking sensors, rain detection, radar within the range the conditions determine you should be using it (i.e. not beyond the distance you can see) and it is necessary anyway to do hazard detection, driver assistance, then it is obviously better to simplify your design and concentrate on using cameras. It'll eventually save money and it'll be a better product. Doing more with less is always a good idea, and cameras can also do a significantly better job of detecting rain and spotting obstructions when parking than the previous generation of technology.

Clearly selling a product that is missing features because you haven't got the new version working yet is complete bulls**t, but that doesn't have any bearing on whether the design change itself is a good idea or not.

I don't think it is 'obviously better'; you're replacing simple, reliable and well-understood systems with extremely complex software.

I've been in a few Teslas now and seen software glitches with my own eyes (e.g. other vehicles not being shown in the correct place on the display, the incorrect type of vehicle being detected, rain detection making the wipers go like the clappers in light drizzle and then carrying on when the screen is bone dry).

Edited to add that a competent hobbyist could knock something up using a Raspberry Pi, a camera module and some open source artificial neural network software. I remember seeing a YouTube video where someone used that basic kit to aim and fire a water pistol at squirrels approaching a bird table, which is a very similar class of problem in terms of the software.

Edited by ATG on Tuesday 22 November 15:24

egomeister said:

ATG said:

Clearly selling a product that is missing features because you haven't got the new version working yet is complete bulls**t, but that doesn't have any bearing on whether the design change itself is a good idea or not.

That used to be called vapourware!

If the product doesn't work, it's not really a product. I don't really understand how Tesla gets away with developing its product while it's in customers' hands rather than in the lab.

k you very much for the money."

ATG said:

Recognising rain on a window or the way an image is distorted by a layer of water isn't extremely complex software. It is exactly the kind of pattern recognition and categorisation that ANNs are really good at. Similarly spotting nearby objects in moving binocular images (i.e. the view you'd get as you reverse towards a bollard) is also simple. The bollards naturally stand out from the background because they move very differently. In particular, the computational burden is mainly in training the ANNs ...i.e. the process where you're calibrating the system before you deploy it into the cars. Once they're trained, using them is not very computationally intensive; i.e. they answer the question "is this windscreen wet?" without having to crunch too many numbers and they crunch the same amount of numbers each time they're asked the question, so it's a reliable, stable algorithm.

Edited to add that a competent hobbyist could knock something up using a Raspberry Pi, a camera module and some open source artificial neural network software. I remember seeing a YouTube video where someone used that basic kit to aim and fire a water pistol at squirrels approaching a bird table, which is a very similar class of problem in terms of the software.

Edited by ATG on Tuesday 22 November 15:24

It's not simple, not at all. Like I said, compare it to an ultrasonic parking sensor or infrared rain sensor; it's orders of magnitude more complex.

Put it this way: my colleague on the desk opposite has a 20-plate Model 3 Performance. I just asked him how well the automatic wipers work, and his response was "Yeah, it's not too bad since the last update but it did start wiping my windscreen when it was dry the other day". I.e. it's taken Tesla's software department more than one go at fixing it, and it's still not very good.

If it was as simple as you imply, they'd have it licked already.

Edited by budgie smuggler on Tuesday 22 November 15:46
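ATG's claim, quoted above, that a trained network answers "is this windscreen wet?" with the same amount of arithmetic every time can be illustrated with a tiny feed-forward classifier. This is only a sketch: the weights are random placeholders rather than a trained rain detector, the 64-value input stands in for a hypothetical downsampled image patch, and nothing here reflects how Tesla actually implements its wipers. It shows that the multiply-add count depends only on the layer sizes, not on the input; it says nothing about whether the answer is right, which is the point budgie smuggler is disputing.

```python
import math
import random

# Hypothetical sizes: a 64-value downsampled patch of the screen, 16 hidden units.
N_INPUTS, N_HIDDEN = 64, 16


def make_layer(n_in, n_out, rng):
    """Random placeholder weights; a real system would load trained values."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, layer, counter):
    """Fully connected layer; counts every multiply-add it performs."""
    weights, biases = layer
    out = []
    for row, bias in zip(weights, biases):
        acc = bias
        for w, xi in zip(row, x):
            acc += w * xi
            counter[0] += 1
        out.append(acc)
    return out


def is_wet(patch, layers):
    """Return (probability the screen is wet, number of multiply-adds used)."""
    counter = [0]
    hidden = [max(0.0, h) for h in dense(patch, layers[0], counter)]  # ReLU
    logit = dense(hidden, layers[1], counter)[0]
    return 1.0 / (1.0 + math.exp(-logit)), counter[0]


rng = random.Random(0)
LAYERS = [make_layer(N_INPUTS, N_HIDDEN, rng), make_layer(N_HIDDEN, 1, rng)]

if __name__ == "__main__":
    for _ in range(3):
        patch = [rng.random() for _ in range(N_INPUTS)]
        prob, ops = is_wet(patch, LAYERS)
        print(f"p(wet) = {prob:.3f}, multiply-adds = {ops}")
```

With these sizes every call costs N_INPUTS × N_HIDDEN + N_HIDDEN multiply-adds whatever the image shows; the hard, expensive part (collecting varied data and training) happens before deployment.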

ATG said:

Recognising rain on a window or the way an image is distorted by a layer of water isn't extremely complex software. It is exactly the kind of pattern recognition and categorisation that ANNs are really good at. Similarly spotting nearby objects in moving binocular images (i.e. the view you'd get as you reverse towards a bollard) is also simple. The bollards naturally stand out from the background because they move very differently. In particular, the computational burden is mainly in training the ANNs ...i.e. the process where you're calibrating the system before you deploy it into the cars. Once they're trained, using them is not very computationally intensive; i.e. they answer the question "is this windscreen wet?" without having to crunch too many numbers and they crunch the same amount of numbers each time they're asked the question, so it's a reliable, stable algorithm.

Interesting that you've used the wipers as an example. They are the worst automatic wipers I have ever seen, by a massive margin. When it's dry they sometimes go at full speed. In light rain, when the visibility gradually decreases, they just don't come on; at least, I've never had the courage (or stupidity) to let the visibility get bad enough before I trigger them manually. They are shockingly, dangerously bad, even more so as the wiper controls (except single sweep and wash) are buried in the on-screen menus.

If the parking sensor replacement is as 'good', all the body shop managers will be rubbing their hands in anticipation of the amount of work that's coming their way.

I believe the fundamental problem with removing the parking sensors is that the cameras have loads of blind spots around the car. This is all very well when you’re moving; as pointed out above, a binocular image can be used to work out where things are. The problem with this is that when the car is parked, things in the cameras’ blind spots can change and there’s no way the car can know about it until it moves.

Chances are it can then move into something. Yes, the driver should be aware and use their own eyes, but the point of parking sensors in the first place was to augment the driver’s eyes for the areas around the car that they couldn’t see.

But the people at Tesla are obviously smarter than me, so I’m sure it’ll be fine. Just like the wipers are fine. And the cruise control that panics at trucks.

My Tesla is a great EV, but a s**t car. BMW i4 next.

I'm not a Tesla owner, but I have a VW Caddy with just a camera for reversing. My thoughts are:

I totally miss parking sensors. I'm not always looking at the screen when reversing, but I'm always listening; I've already had one minor bump when not looking at the screen.

It's very difficult to judge distance when reversing towards a wall, a post or another car, as they are all at different heights.

The camera can get very dirty at this time of year and needs regular wiping.

I'm going to retrofit reversing sensors; I miss them so much. Just my 2 pennies' worth.

budgie smuggler said:

ATG said:

Recognising rain on a window or the way an image is distorted by a layer of water isn't extremely complex software. It is exactly the kind of pattern recognition and categorisation that ANNs are really good at. Similarly spotting nearby objects in moving binocular images (i.e. the view you'd get as you reverse towards a bollard) is also simple. The bollards naturally stand out from the background because they move very differently. In particular, the computational burden is mainly in training the ANNs ...i.e. the process where you're calibrating the system before you deploy it into the cars. Once they're trained, using them is not very computationally intensive; i.e. they answer the question "is this windscreen wet?" without having to crunch too many numbers and they crunch the same amount of numbers each time they're asked the question, so it's a reliable, stable algorithm.

Edited to add that a competent hobbyist could knock something up using a Raspberry Pi, a camera module and some open source artificial neural network software. I remember seeing a YouTube video where someone used that basic kit to aim and fire a water pistol at squirrels approaching a bird table, which is a very similar class of problem in terms of the software.

Edited by ATG on Tuesday 22 November 15:24

It's not simple, not at all. Like I said, compare it to an ultrasonic parking sensor or infrared rain sensor; it's orders of magnitude more complex.

Put it this way: my colleague on the desk opposite has a 20-plate Model 3 Performance. I just asked him how well the automatic wipers work, and his response was "Yeah, it's not too bad since the last update but it did start wiping my windscreen when it was dry the other day". I.e. it's taken Tesla's software department more than one go at fixing it, and it's still not very good.

If it was as simple as you imply, they'd have it licked already.

Edited by budgie smuggler on Tuesday 22 November 15:46

ATG said:

The "traditional" automatic wiper system on my car often fails to respond to rain quickly enough ...so what do we conclude? Its design is fundamentally flawed? Its engineering is overly complex?

No, I conclude that you have the sensitivity turned down too low, or your windscreen was too dirty, or your sensor wasn't fitted properly at the factory, or the car has had a replacement screen at some point and the sensor wasn't aligned properly, or there's a manufacturing fault in the sensor, etc. etc.

I can make that assumption as I've had enough cars with this now (and they're mostly cheap ones) to know this technology is basically sorted already. Occasionally you need to do an extra manually initiated wipe in intermittent conditions. But it will never sit there continually wiping a dry screen. It will never go full pelt when it's just spitting.

Honestly, I do take your point, but just go back through this thread and read the owners' experiences of how well Vision-based systems work in the real world, like the first post, which talks about Vision-based cruise control, or the recent one from RichardM5 about the wipers ("they are the worst automatic wipers I have ever seen, by a massive margin").

It's all well and good talking about some hobbyist knocking up a squirrel recognition tool over a weekend, which has to do a single job and work in one specific environment on the one day they made their YouTube video, but that's very different from making software that can do that job in California, Scotland and Norway, by day and night, in fog, mist and drizzle, when being dazzled by reflections, etc.

Put it this way: Tesla have a pretty decent sized software development department who do this stuff all day every day and they still can't make it work well enough. I think it's much much harder than you make out.

Edited by budgie smuggler on Wednesday 23 November 10:25

ATG said:

Recognising rain on a window or the way an image is distorted by a layer of water isn't extremely complex software. It is exactly the kind of pattern recognition and categorisation that ANNs are really good at. Similarly spotting nearby objects in moving binocular images (i.e. the view you'd get as you reverse towards a bollard) is also simple. The bollards naturally stand out from the background because they move very differently. In particular, the computational burden is mainly in training the ANNs ...i.e. the process where you're calibrating the system before you deploy it into the cars. Once they're trained, using them is not very computationally intensive; i.e. they answer the question "is this windscreen wet?" without having to crunch too many numbers and they crunch the same amount of numbers each time they're asked the question, so it's a reliable, stable algorithm.

Edited to add that a competent hobbyist could knock something up using a Raspberry Pi, a camera module and some open source artificial neural network software. I remember seeing a YouTube video where someone used that basic kit to aim and fire a water pistol at squirrels approaching a bird table, which is a very similar class of problem in terms of the software.

Edited by ATG on Tuesday 22 November 15:24

You miss the fact that the cameras are right next to the windscreen, and the way the lenses are focused means they effectively don’t see anything on the glass except extremes. It’s basic optics; ask a serious photographer or try it yourself. Stand by your house window when it’s raining and photograph something in your garden: the chances are you won't see any rain on the glass. In fact, the cameras in these things are designed not to see minor blemishes, otherwise dirt or a small stone chip would render the cameras useless. You’d set up the optics to minimise the risk of that.

Secondly, Tesla don’t use binocular vision. The three forward cameras all have different focal lengths. It would be great if they did have binocular vision, as it would solve several issues, including proper depth perception and an element of redundancy. They have also admitted, after six years of trying, that even stitching the edges of the images together is proving very difficult.

In the happy-day scenario, it kind of works in a Tesla. The thing that’s going to prevent level 3 or above driving is the unpredictable failures: hitting a big fat bee that leaves a splodge across the camera, light artefacts blinding the cameras (a commonly reported issue now), etc. Musk wants L4, but there’s no chance with the sensor suite on the cars, even if the cars could drive when everything was perfect, simply because things won’t be perfect.

As for parking sensors, well, many said we drive using our eyes, so why can’t the car with cameras? Well, if we want to take the evolutionary biology argument, why do we have nerves in our fingertips? If I’m picking something up, surely my eyesight tells me if I’m holding it? Maybe it’s because we can’t always see what we’re picking up, just like you can’t always see what you’re pulling up to in the car. When you’re parking, why not have a sensor for the last 30 cm, just like we have nerves that can sense heat and feel touch, texture and pressure to fine-tune the detail?

Apart from all that, jobs a good one.

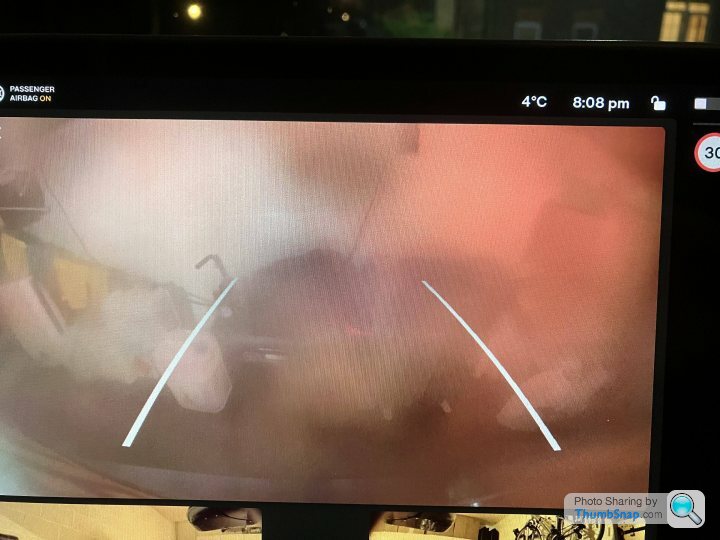

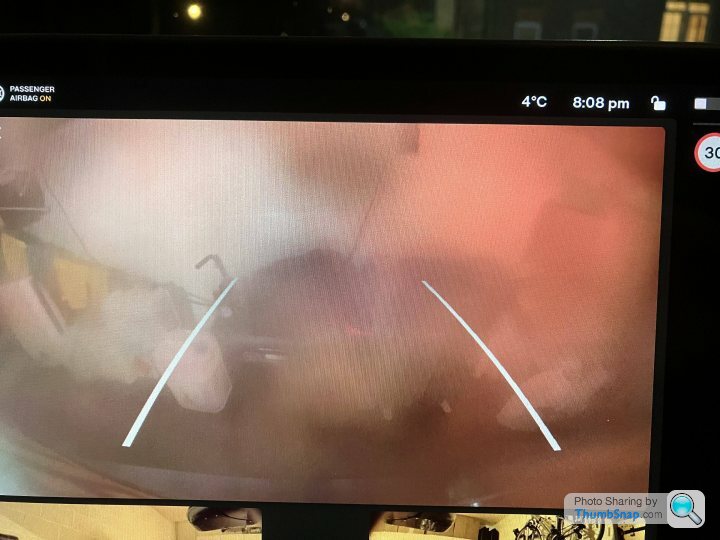

Maybe Elon would like to explain how, given that the camera that's used to control the wipers can't distinguish when you can actually see out of the windscreen and when you can't, the rear view camera could possibly be better than traditional parking sensors when the visibility is like this :

That was after a short drive of less than 5 miles after cleaning the camera before hand, reversing into a well lit garage.

Reversing into an unlit space after a long drive at night in the winter when the roads are damp and salty; good luck with that.

That was after a short drive of less than 5 miles after cleaning the camera before hand, reversing into a well lit garage.

Reversing into an unlit space after a long drive at night in the winter when the roads are damp and salty; good luck with that.

annodomini2 said:

Register1 said:

z4RRSchris said:

says cars after October 22, so mine should be delivered in December, so maybe I'll get a radar car?

Our M3 SR+ is due between November 20 and December 6.

Hoping all is present and correct by then.
